Organizations often run several applications to support their business operations. Data generated by these applications is frequently collected with Kafka for analytics or other downstream processes. Kafka can serve as the backbone of an event-driven architecture for processing and analyzing large volumes of real-time data. For organizations that want to use this data for analytics, integrating Kafka with a data warehouse like Snowflake becomes crucial. By connecting Kafka to Snowflake, organizations can centralize event data from their applications and analyze it with Snowflake.

This guide explores how you can connect Kafka to Snowflake, focusing on using the Kafka to Snowflake connector and alternative methods for streaming from Kafka to Snowflake efficiently.

What is Kafka?

Apache Kafka is an open-source, distributed event-streaming platform used for publishing and subscribing to streams of records. In a Kafka to Snowflake pipeline, it is the source of the real-time data that Snowflake analyzes.

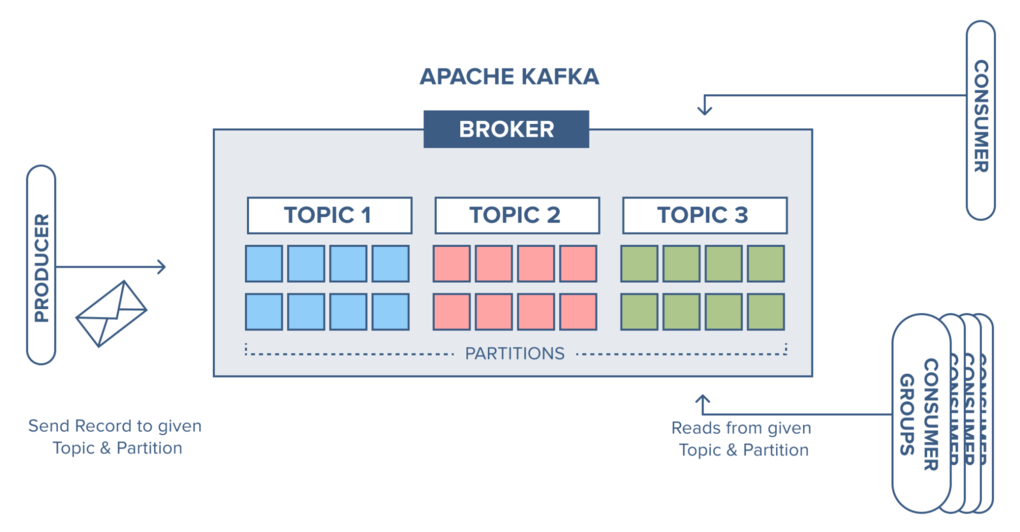

Kafka uses a message broker system that can sequentially and incrementally process a massive inflow of continuous data streams. The source systems, called producers, send streams of data to Kafka brokers, and the target systems, called consumers, read and process the data from the brokers. The data isn’t limited to any single destination; multiple consumers can read the same data from a Kafka broker.
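To make the producer and consumer roles concrete, here is a minimal in-memory sketch. It does not use a Kafka client; `TopicLog` and `Consumer` are hypothetical stand-ins that only illustrate how several consumers can read the same records at their own pace.

```python
from dataclasses import dataclass, field


@dataclass
class TopicLog:
    """Toy stand-in for one Kafka topic partition: an append-only list."""
    records: list = field(default_factory=list)

    def append(self, value):
        """Producer side: add a record and return its offset."""
        self.records.append(value)
        return len(self.records) - 1


@dataclass
class Consumer:
    """Toy consumer that tracks its own read position (offset)."""
    name: str
    offset: int = 0

    def poll(self, log, max_records=10):
        """Read up to max_records starting at this consumer's offset."""
        batch = log.records[self.offset:self.offset + max_records]
        self.offset += len(batch)
        return batch


log = TopicLog()
for event in ["order_created", "order_paid", "order_shipped"]:
    log.append(event)

analytics = Consumer("analytics")
billing = Consumer("billing")

# Reading does not remove records, so both consumers see the same stream.
analytics_batch = analytics.poll(log)
billing_batch = billing.poll(log, max_records=2)
print(analytics_batch)
print(billing_batch)
```

Each consumer keeps its own offset, which is why adding a new consumer does not affect the ones already reading.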

Here are some of Apache Kafka’s key features:

- Scalability: Apache Kafka is massively scalable since it allows data distribution across multiple servers. It can be scaled quickly without any downtime.

- Fault Tolerance: Since Kafka is a distributed system with several nodes running together to serve the cluster, it tolerates the failure of individual nodes or machines.

- Durability: Kafka persists messages to disk and replicates them across brokers within the cluster. This helps in building a highly durable messaging system.

- High Performance: Kafka decouples producers from consumers and can process messages at very high speed, with rates exceeding 100,000 messages per second. It maintains stable performance even with terabytes of data.

Learn more: What is a Kafka Data Pipeline?

Why Snowflake is Ideal for Centralizing Kafka Data Streams

Snowflake is a fully managed, cloud-based data warehousing platform. It runs on cloud infrastructure from Azure, AWS, or GCP to manage big data for analytics. Snowflake supports ANSI SQL and handles both structured and semi-structured data formats, like XML, Parquet, and JSON. You can run SQL queries on your Snowflake data to manage it and generate insights.

Let’s look at some of the key Snowflake features to understand why it’s an ideal choice for a centralized data warehouse:

- Centralized Repository: Snowflake consolidates different types of data from various sources into a single, unified repository. It eliminates the need for maintaining separate databases or multiple data silos by providing a centralized storage environment.

- Standard and Extended SQL Support: Snowflake supports ANSI SQL and advanced SQL functionality like lateral view, merge, statistical functions, etc.

- Support for Semi-Structured Data: Snowflake supports semi-structured data ingestion in a variety of formats like JSON, Avro, XML, Parquet, etc.

- Fail-Safe: Snowflake’s Fail-safe feature protects historical data in the event of a system failure, such as a disk or hardware failure. It provides a 7-day period during which historical data may be recoverable.

Methods for Connecting Kafka to Snowflake

To transform your Kafka streams into Snowflake tables, you can use either the Kafka to Snowflake connector or SaaS alternatives.

Method #1: Using Snowflake’s Kafka Connector

Snowflake provides a Kafka connector, an Apache Kafka Connect plugin that handles the Kafka to Snowflake data transfer. Kafka Connect is a framework that connects Kafka to external systems for reliable and scalable data streaming. You can use Snowflake’s Kafka connector to ingest data from one or more Kafka topics into Snowflake tables. Currently, there are two versions of this connector: a Confluent version and an open-source version.

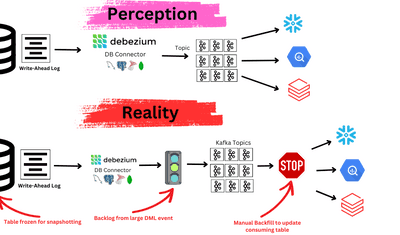

The Kafka to Snowflake connector allows you to stay within the Snowflake ecosystem and removes the need for external tools for data migration. It uses Snowpipe or the Snowpipe Streaming API to ingest your Kafka data into Snowflake tables.

The connector is also commonly used in Change Data Capture (CDC) pipelines. When a Kafka topic carries change events from a source system, the connector lands those events in Snowflake as they arrive, which helps keep your Snowflake data up to date.

It sounds very promising. But how does it work? Before you use the Kafka to Snowflake connector, here’s a list of the prerequisites:

- A Snowflake account with read and write access to the target database, schema, and tables.

- A running Apache Kafka or Confluent Kafka cluster.

- Kafka Connect installed, from either the Apache Kafka or the Confluent distribution.

The Kafka connector subscribes to one or more Kafka topics based on the information in its configuration file. You can also use the Confluent command line to provide the configuration information.
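As an illustration of what that configuration might contain, here is a minimal sketch that builds the JSON payload Kafka Connect accepts in distributed mode. The property names follow the Snowflake connector documentation as best I know it, so verify them against the current docs. Every value (connector name, topics, account URL, user, key, database, schema) is a hypothetical placeholder.

```python
import json


def build_connector_config(name, topics, account_url, user, private_key,
                           database, schema):
    """Return a Kafka Connect payload for the Snowflake sink connector."""
    return {
        "name": name,
        "config": {
            "connector.class":
                "com.snowflake.kafka.connector.SnowflakeSinkConnector",
            "tasks.max": "1",
            # Comma-separated list of topics the connector subscribes to.
            "topics": ",".join(topics),
            "snowflake.url.name": account_url,
            "snowflake.user.name": user,
            "snowflake.private.key": private_key,
            "snowflake.database.name": database,
            "snowflake.schema.name": schema,
            "key.converter":
                "org.apache.kafka.connect.storage.StringConverter",
            "value.converter":
                "com.snowflake.kafka.connector.records.SnowflakeJsonConverter",
        },
    }


# All values below are placeholders, not real credentials or hosts.
payload = build_connector_config(
    name="orders_to_snowflake",
    topics=["orders", "payments"],
    account_url="myaccount.snowflakecomputing.com:443",
    user="KAFKA_CONNECTOR_USER",
    private_key="<private key loaded from a secret store>",
    database="ANALYTICS",
    schema="KAFKA_RAW",
)
print(json.dumps(payload, indent=2))
```

In standalone mode, the same keys would go into a properties file instead of JSON.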

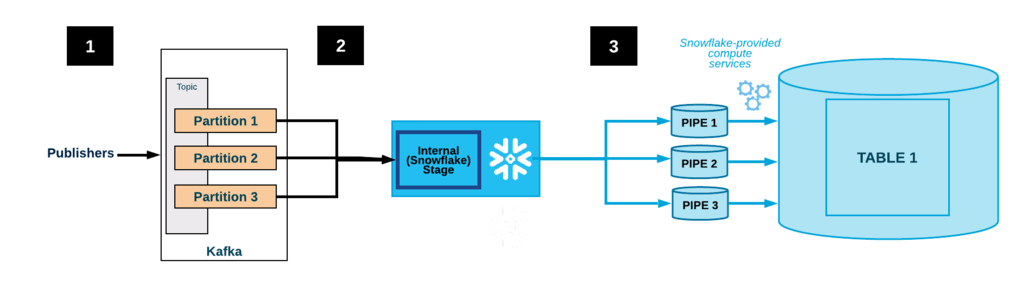

The Kafka to Snowflake connector creates the following objects for each topic:

- An internal stage that temporarily stores each topic’s data files.

- A pipe to ingest the data files for each topic partition.

- A table for each topic.

Let’s say one or more applications publish Avro or JSON records to a Kafka cluster. Kafka divides each topic into partitions and distributes the records across them. The Kafka connector buffers the messages it reads from the topics. When a threshold is reached (elapsed time, buffered memory, or number of messages), the connector writes the buffered messages to a temporary file in the internal stage. The connector then triggers Snowpipe to ingest the temporary file into the table. Meanwhile, the connector keeps monitoring Snowpipe. After confirming that the data is loaded into the table, the connector deletes each file in the internal stage.
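The buffering behavior described above can be sketched in a few lines. This is a simplified illustration, not the connector's implementation: `MessageBuffer` and its thresholds are hypothetical, and the time limit is only checked when a new message arrives.

```python
import time


class MessageBuffer:
    """Toy buffer that flushes on a record, byte, or time threshold."""

    def __init__(self, max_records=3, max_bytes=1024, max_seconds=60.0,
                 clock=time.monotonic):
        self.max_records = max_records
        self.max_bytes = max_bytes
        self.max_seconds = max_seconds
        self._clock = clock
        self._records = []
        self._bytes = 0
        self._started = clock()
        self.flushed_files = []  # each entry stands in for one staged file

    @property
    def pending(self):
        """Number of buffered messages not yet written to a file."""
        return len(self._records)

    def add(self, message: bytes):
        """Buffer one message and flush if any threshold is reached."""
        self._records.append(message)
        self._bytes += len(message)
        if self._should_flush():
            self.flush()

    def _should_flush(self):
        return (len(self._records) >= self.max_records
                or self._bytes >= self.max_bytes
                or self._clock() - self._started >= self.max_seconds)

    def flush(self):
        """Write buffered messages out as one 'file' and reset the buffer."""
        if self._records:
            self.flushed_files.append(list(self._records))
        self._records = []
        self._bytes = 0
        self._started = self._clock()


buffer = MessageBuffer(max_records=3)
for i in range(7):
    buffer.add(f'{{"id": {i}}}'.encode())

print(len(buffer.flushed_files), "files flushed,", buffer.pending, "pending")
```

Smaller thresholds reduce latency but produce more, smaller files; larger thresholds do the opposite.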

Method #2: SaaS Alternatives for Kafka to Snowflake Integration

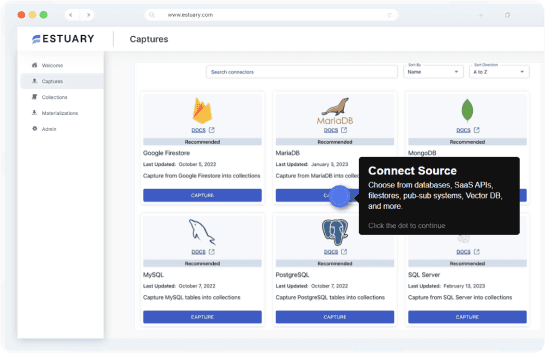

If you prefer a more user-friendly and less manual approach, SaaS ETL tools like Estuary Flow are excellent alternatives. These tools help automate data migration and provide real-time data pipelines with minimal setup.

With Estuary Flow, you can create real-time data pipelines at scale. Thanks to Flow’s built-in connectors, you can establish a connection between two platforms with just a few clicks.

Let’s look at how you can use Estuary Flow to simplify the process of sending data from Kafka to Snowflake:

Step 1: Log in or Register

- To start using Flow, you can register for a free account. However, if you already have one, then log in to your Estuary account.

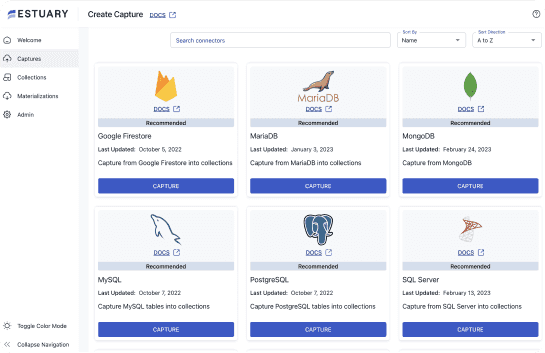

Step 2: Create a Capture

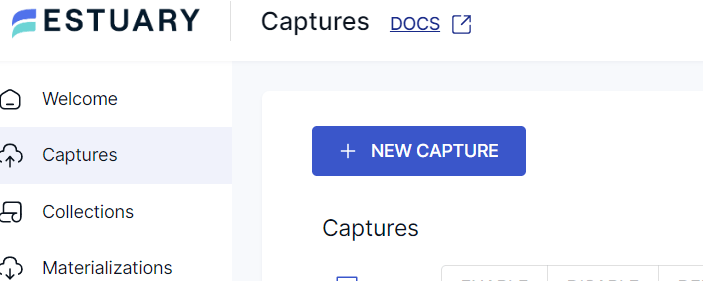

- On the left-side pane of the Estuary dashboard, click on Captures.

- On the Captures page, click on the New Capture button.

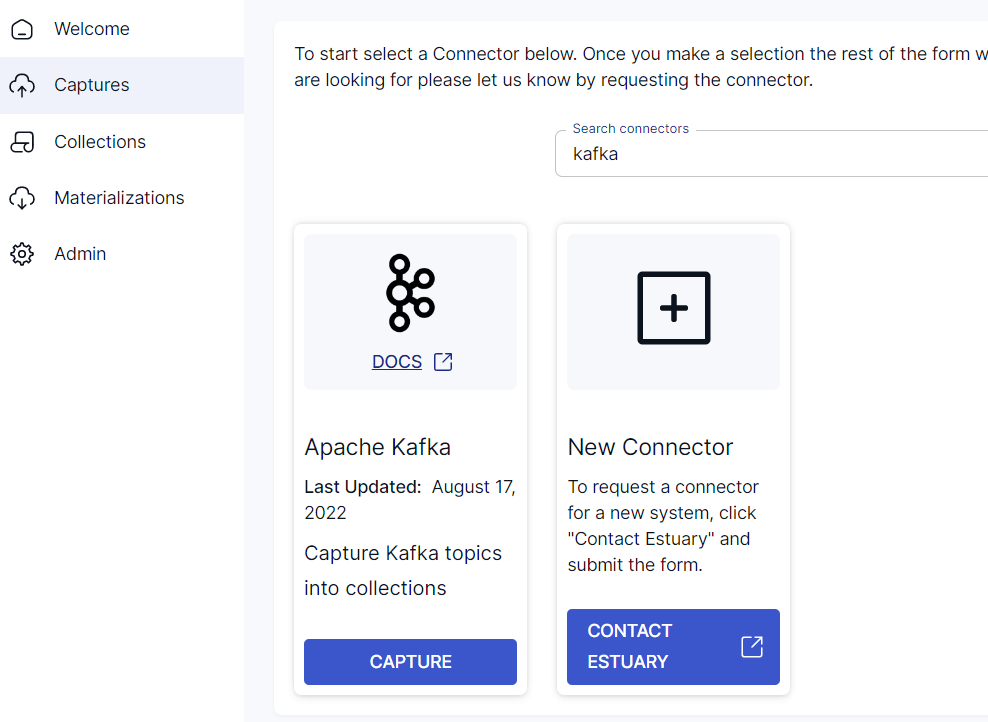

- Since you’re sourcing data from Apache Kafka, this will form the source endpoint of the data pipeline. Enter Kafka in the Search Connectors box or scroll down to find the connector. Now, click on the Capture button.

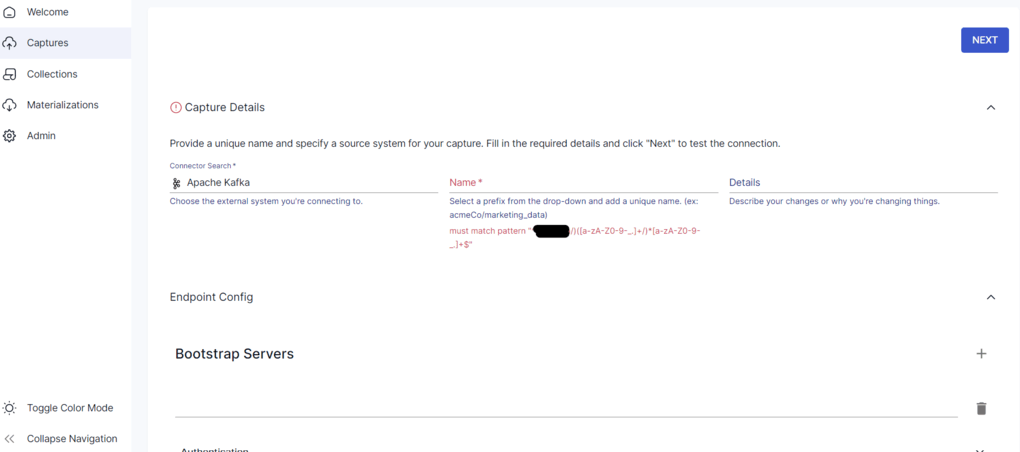

- You’ll be taken to the Apache Kafka capture page. Within the Endpoint Config section, provide the details of the bootstrap servers. You can select a SASL authentication mechanism of your choice, like PLAIN, SCRAM-SHA-256, or SCRAM-SHA-512, and fill in its details. Once you’re done providing the details, click on the Next button. Then, click on Save and Publish.
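Before entering these details in the form, it can help to see how the same settings look as Kafka client properties. The sketch below builds librdkafka-style properties (the naming used by the confluent-kafka Python package). This is not Estuary Flow's configuration format; the function name, hosts, and credentials are hypothetical.

```python
SUPPORTED_MECHANISMS = {"PLAIN", "SCRAM-SHA-256", "SCRAM-SHA-512"}


def sasl_client_properties(bootstrap_servers, mechanism, username, password,
                           use_tls=True):
    """Return client properties for SASL authentication against Kafka."""
    if mechanism not in SUPPORTED_MECHANISMS:
        raise ValueError(
            f"Unsupported SASL mechanism {mechanism!r}; "
            f"expected one of {sorted(SUPPORTED_MECHANISMS)}"
        )
    return {
        "bootstrap.servers": ",".join(bootstrap_servers),
        # SASL_SSL encrypts the connection; SASL_PLAINTEXT does not.
        "security.protocol": "SASL_SSL" if use_tls else "SASL_PLAINTEXT",
        "sasl.mechanism": mechanism,
        "sasl.username": username,
        "sasl.password": password,
    }


# Placeholder hosts and credentials for illustration only.
props = sasl_client_properties(
    bootstrap_servers=["broker-1.example.com:9093", "broker-2.example.com:9093"],
    mechanism="SCRAM-SHA-256",
    username="flow_capture",
    password="<secret>",
)
print(props["bootstrap.servers"])
```

Note that the SCRAM mechanisms are spelled with the `SCRAM-` prefix; a bare `SHA-256` is rejected by the check above.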

Step 3: Create a Materialization

To connect Flow to Snowflake, you’ll need a user role with appropriate permissions to your Snowflake schema, database, and warehouse. You can accomplish this by running this quick script.

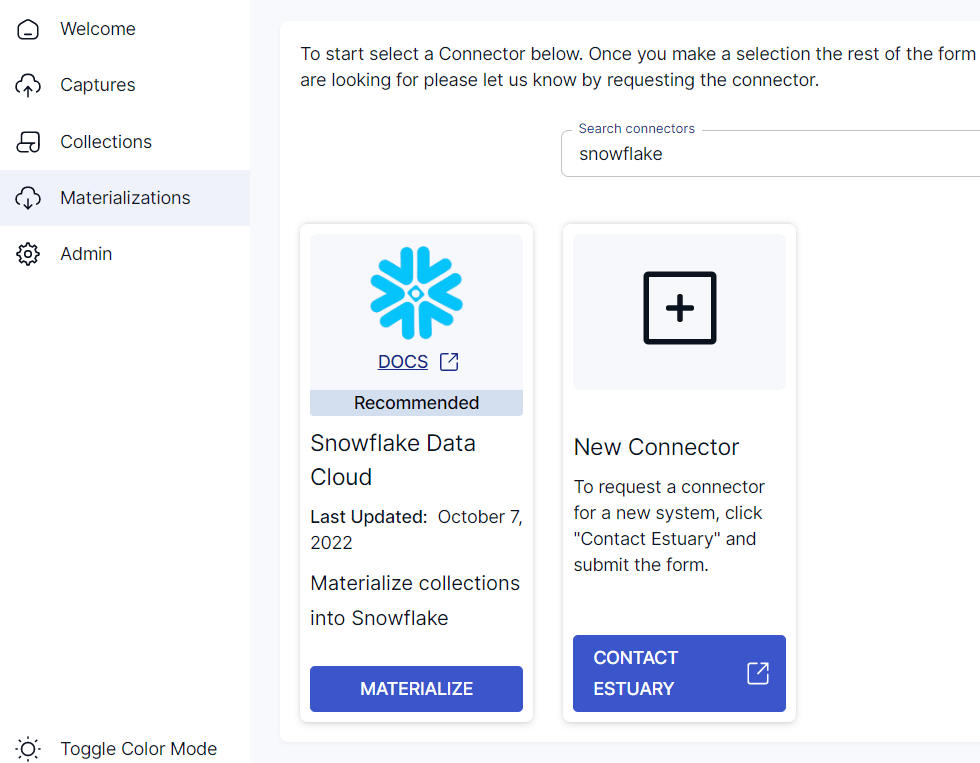
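The script itself is not reproduced in this text, so the following is only a rough sketch of the kind of statements such a setup usually involves. It is not Estuary's script. The helper function and all object names are hypothetical, and the SQL uses standard Snowflake `CREATE` and `GRANT` statements.

```python
def snowflake_setup_statements(role, user, password, warehouse, database,
                               schema):
    """Return SQL statements that give one role and user access to a schema.

    Values are interpolated directly, so only pass trusted identifiers.
    """
    return [
        f"CREATE ROLE IF NOT EXISTS {role};",
        f"CREATE USER IF NOT EXISTS {user} PASSWORD = '{password}' "
        f"DEFAULT_ROLE = {role} DEFAULT_WAREHOUSE = {warehouse};",
        f"GRANT ROLE {role} TO USER {user};",
        f"GRANT USAGE ON WAREHOUSE {warehouse} TO ROLE {role};",
        f"GRANT USAGE ON DATABASE {database} TO ROLE {role};",
        f"GRANT ALL ON SCHEMA {database}.{schema} TO ROLE {role};",
    ]


# Placeholder names; replace them with your own objects.
statements = snowflake_setup_statements(
    role="FLOW_ROLE",
    user="FLOW_USER",
    password="<secret>",
    warehouse="FLOW_WH",
    database="ANALYTICS",
    schema="KAFKA_RAW",
)
print("\n".join(statements))
```

You could paste the printed statements into a Snowflake worksheet and run them with a role that is allowed to create users and roles.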

- Navigate back to the Estuary dashboard. Click on Materializations on the left-side pane of the dashboard. Once you’re on the Materializations page, click on the New Materialization button.

- Since your destination database is Snowflake, it forms the other end of the data pipeline. Enter Snowflake in Search Connectors or scroll down to find it. Click on the Materialize button.

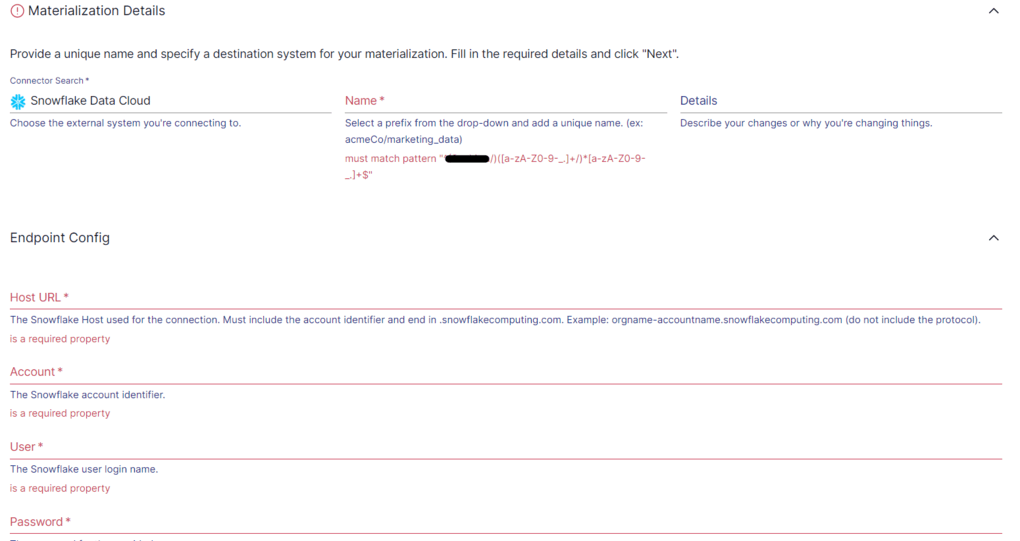

- You’ll be redirected to the Snowflake materialization connector page. Fill in the required fields, including a unique name for your materialization. You also need to provide the Endpoint Config details like user login name, password, Host URL, Account Identifier, SQL Database, and Schema details. Finally, you can choose one or more collections to materialize. Once you’ve filled in the required details, click on Next. Then, click on Save and Publish.

The Estuary Snowflake integration process writes data changes to a Snowflake staging table and immediately applies those changes to the destination table.
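The stage-then-apply pattern can be illustrated with plain Python dictionaries. This is a conceptual sketch only, not how Flow or Snowflake implements it. The `_deleted` marker and the `id` key are assumptions made for the example.

```python
def apply_staged_changes(destination, staged_rows, key="id"):
    """Apply staged change rows to the destination, then empty the stage."""
    for row in staged_rows:
        if row.get("_deleted"):
            # A delete marker removes the matching destination row, if any.
            destination.pop(row[key], None)
        else:
            # Insert new rows and overwrite existing ones (an upsert).
            destination[row[key]] = {
                k: v for k, v in row.items() if k != "_deleted"
            }
    staged_rows.clear()
    return destination


destination = {
    1: {"id": 1, "status": "created"},
    2: {"id": 2, "status": "created"},
}
staging = [
    {"id": 1, "status": "paid"},     # update
    {"id": 3, "status": "created"},  # insert
    {"id": 2, "_deleted": True},     # delete
]

apply_staged_changes(destination, staging)
print(destination)
```

In a warehouse, the same idea is typically expressed as a merge from the staging table into the destination table.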

Why Choose Estuary Flow?

- Real-Time Data Integration: Supports continuous data streaming with minimal latency.

- Ease of Use: Requires no coding, with a straightforward user interface.

- Scalability: Handles large-scale data transfers efficiently.

- Broad Connectivity: Connects easily with various data sources and destinations.

If you’d like more detailed instructions on using Estuary Flow, refer to the Estuary Flow documentation.

Conclusion

Both the Kafka to Snowflake connector and SaaS alternatives like Estuary Flow offer effective solutions for integrating Kafka data streams with Snowflake. The Kafka connector provides a more hands-on approach that stays within Snowflake’s ecosystem, while Estuary Flow simplifies the process with automated real-time pipelines, reducing the need for manual configuration and technical expertise.

By choosing the right method for your needs, you can ensure a seamless and efficient Kafka to Snowflake integration, enabling real-time data insights.

Get started with Estuary Flow today - Register for Flow here to start for free
